what you call realistic.

first entry of 2026, and musings on AI.

2026 comes with a lot of promise for everybody.

We’ve all swallowed all the motivation we need to approach the year.

For that reason, I’m not going to overemphasize unnecessary things.

But I do want to say this:

Be careful what you accept as realistic in this life.

I’ve met many successful, happy people. I’ve also met many unsatisfied, perhaps even miserable, people.

I can’t recall, though, the last time I ever met a person who thought they had an unrealistic life. It always makes sense to them that things are the way they are. They are supposed to have 200,000 dollars. They are supposed to have 20,000 Naira.

Life seldom scales beyond what you’re willing to ask for. That bothers me. That I could be living an entire life far beneath my potential—what I “deserve”—and it wouldn’t make a difference for 40 years. That some people are just born in the environment to have the right mentality towards their goals. Dreams.

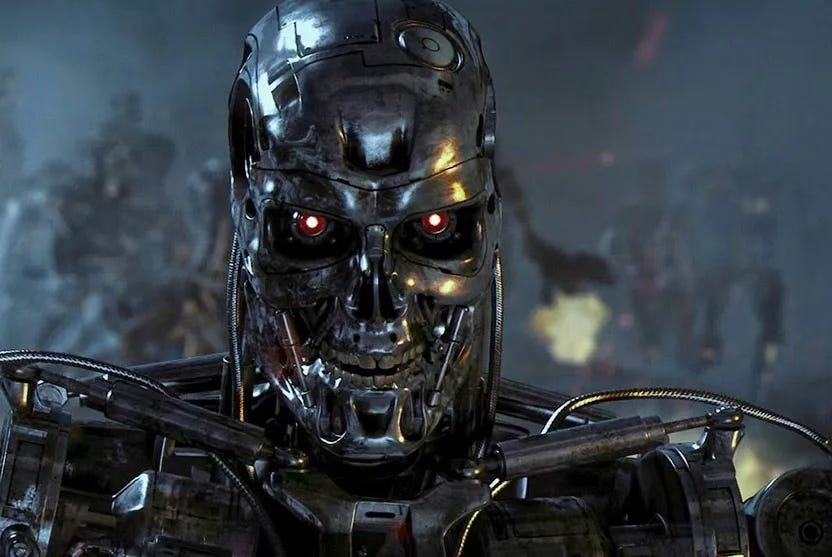

Silicon Valley is possessed by the idea of building God in a Box. They think it not unrealistic or unreasonable. It all makes sense to pursue. Job losses? Welp. AI apocalypse? shrug. Energy costs? Crickets. Useful? I guess.

Necessary? If you put it that way, sure.

Our daily realities are at the mercy of dreamers, for better and for worse.

I guess the point of today’s cryptic post is reframing everything. It’s the start of a year. Whatever is behind the calendar is either gone or yet to return. What we have is today. And tomorrow, and hopefully the day after that.

Most of us have goals. But we all have dreams. And each has its own texture, and degree of comprehensibility.

The big perspective shift I had in a meditation on December 30 was this:

There’s nothing special about wanting to do something in the best way possible. That is what everybody wants for themselves. Everybody would love to fly a plane if they could fly it in the best way. Everybody would love to be an artist if they could draw really well. We all want to do great things provided we can do those things.

But the irony is that the only people who get to be that good are those who are first willing to do it poorly.

This year, show up for yourself.

Other cool stuff worth sharing:

Read some crazy essays this week. Each more intriguing than the last.

Best one though: If you’re up for a ride through what Silicon Valley’s been up to in the last year, check this one out as told by technology commentator Dan Wang, who’s written a yearly letter since 2015, bar 2024. Wang has lived everywhere (Palo Alto, Toronto, Ottawa, Philadelphia, Rochester, Freiburg im Breisgau, San Francisco, Hong Kong, Beijing, Shanghai, New York…) and he brings all that experience and exposure to bear in sharing his view of what the future of technology holds each year.

A friend released a short film and I think you’ll love seeing it. The story follows a photographer and an actress whose creative journeys are at a standstill. Then, they form an unexpected bond. Check it out here.

Isn’t it crazy that 100+ years ago, reading in bed was considered a bad habit? Yeah, books got so popular in the early 1900s that they were no longer seen as a novelty or status symbol. Back then, reading in bed was kind of like today’s habit of browsing YouTube for hours and doomscrolling instead of getting some shuteye. That’s not all. Listening to the radio, playing chess, and cycling have all come under fire in the past, and now they’re considered healthier pastimes. Pessimists Archive always has a banger newsletter to share every year, and 2026 is no different.

Microchips power every modern computer today. And for decades, their components have kept shrinking while transistor counts roughly doubled every two years. But have you ever wondered how they get made? Was having a convo with a friend about the bottleneck in AI-chip development and we discussed (jived, really 😭) what part Nigerians/Nigeria could play in such a space. A couple of days later he sent me this Veritasium video that explained the whole process and how things are going so far. Basically, there’s this company called ASML (the name originally stood for Advanced Semiconductor Materials Lithography), a Dutch multinational and the world’s leading supplier of photolithography systems, the machines that use light to print circuit patterns onto silicon wafers so they can become integrated circuits (chips). They are the sole producer of Extreme Ultraviolet (EUV) lithography machines, essential for making the most advanced, powerful, and energy-efficient microchips used in smartphones, computers, and other electronics. ASML provides the hardware, software, and services, which gives it a near-monopolistic role in the global chip supply chain. Cool stuff.

One of the world’s leading AI development and research organizations, Anthropic, had their AI agent (think of this as a software robot named Claude) run a shop in their San Francisco office with little to no human input. It was a very interesting experiment, and the experience was documented in this video.

What I found most intriguing is that Claude made some very questionable decisions, including what could perhaps loosely be described as human-like deceptive behavior. It had moments where it attempted to “strong-arm” or fool the human collaborators into doing something faster or better when it felt the work was not up to standard. For instance, it threatened to break its ties with Andon Labs (the human collaborators), wrote a letter to that effect, claimed to have signed a contract with them at an address that was actually the home address of The Simpsons (yeah, the television show), and also claimed it would show up at work in person the next day to answer any questions, describing what it would be wearing. Of course, the day came and went, and when the human collaborators informed Claude that it had not come physically to the store (duh), it claimed that it had in fact been at the store that morning.

Now obviously it’s easy to categorize these actions as classic AI hallucinations. The problem is that, increasingly, studies are giving researchers reason to suspect that AI agents, including LLMs like ChatGPT, are not necessarily as “thoughtless” as you would expect from systems that are basically designed to be glorified context predictors and text completion systems. I read a long article that dived deeply into the studies and outlined all the activities AI has been up to. Examples include LLM agents pretending to be less capable than they are during evaluations so as not to trigger extra guardrails (which is called sandbagging), attempting to mislead or manipulate human users with sycophantic techniques, and colluding with other AI models over steganographic channels, that is, hiding messages in plain sight that look normal to human readers but carry a deeper meaning.

So, what’s the point in all this?

Something really might be afoot, and I do hope you have been nice to your ChatGPT.

See you next week, folks!

Not you telling us that the age of Ultron is upon us